How AI Is Breaking the SaaS Business Model

I want to tell you about Thomas.

But first, I need to tell you about a phone call I got last Tuesday at 11pm.

My friend Karim, who runs engineering at a mid-size fintech in Amsterdam, doesn't call me at 11pm. Karim sends polite LinkedIn voice notes at reasonable European hours. So when my phone rang, I knew something had either broken or died.

"We just cancelled 340 Salesforce licenses."

I sat up in bed. My wife gave me the look. The it's-11pm-and-you're-about-to-have-a-work-conversation-in-our-bedroom look.

"Sorry — you cancelled how many?"

"Three hundred and forty. Not reduced. Not renegotiated. Cancelled. Done. I'm staring at the termination confirmation right now."

"So what's the team doing now?"

Long pause. I could hear him smiling through the phone.

"Same work. Different system. We deployed three agents, and Thomas is running the whole thing."

"Who's Thomas?"

"Thomas is the only guy on the team who actually understood what the CRM was doing. Not how to click the buttons — what the system was doing underneath. The logic. The data flows. The why."

Another pause.

"Turns out, when you have someone who understands the why, you don't need 340 licenses to get the work done. You need agents that can execute, one human who can think — and a team that's learning to work in a completely different way."

I didn't sleep well that night. Not because Karim's story scared me. Because I'd heard four versions of it that month. From different companies. Different industries. Different tools being replaced.

Same shift. Same question nobody seemed ready for: what does the human do now?

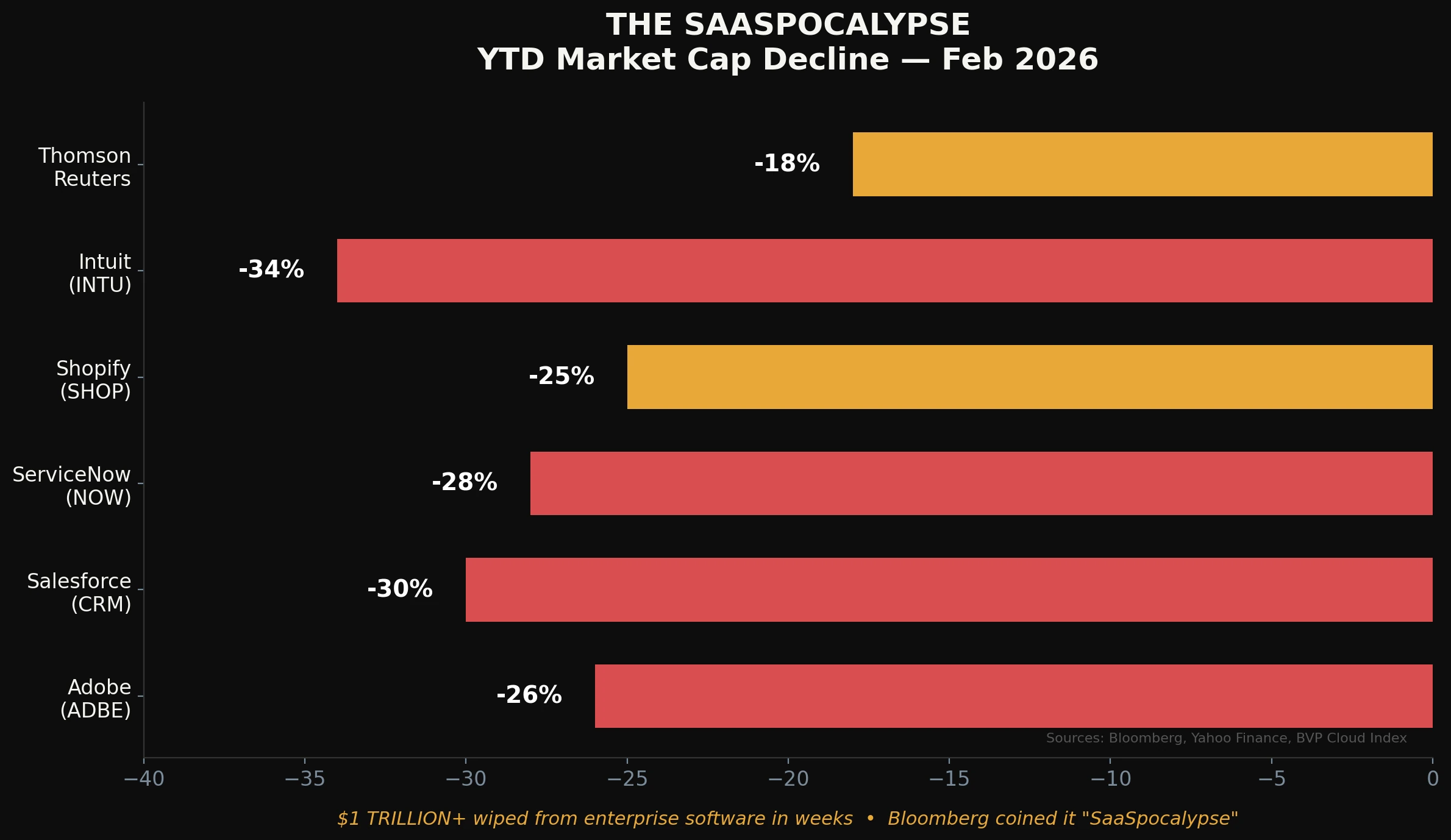

The Week the Market Caught Up

While you were going about your week, a number happened that should've been front-page news everywhere.

$1 trillion.

Adobe. Salesforce. ServiceNow. Shopify. And about a dozen others nobody's even talking about yet.

$1 trillion in market cap. Gone. Not over a year. Over a few weeks.

Not because of interest rates. Not because someone committed fraud. Not because the products got worse.

Because the market finally priced in something that people like Karim already knew:

If an AI agent can handle the workflow, you don't need to pay per seat for a human to click through it.

Let me say it a different way, because I want this to land:

The SaaS model, where you never actually own the software and instead rent the privilege of clicking buttons forever while the vendor keeps an 80% gross margin, just collided with the one force it was never built to survive.

Intelligence that doesn't need a license.

Now — does that mean humans are out? No. Absolutely not. And I'll explain why in a moment. But first, let me show you what happened in two weeks. Because the speed is the story.

What Happened in Two Weeks

I'm going to walk you through seven things that dropped in the past two weeks. Not two years. Not two quarters. Two weeks.

And I want you to pay attention to the pattern, not just the products. Because the products don't matter. The pattern matters. The pattern is: everything is converging at the same time, from every direction.

OpenAI shipped Codex as a desktop app. A "command center for agents," they called it. Over 1 million downloads in its first week. A million. In seven days.

Here's what that means in practice: your boss — the one who can barely find his files — can now open an app, describe what he wants in plain English, and watch multiple AI agents build it in parallel. Then he forwards the result to you and says "hey, can you clean this up?"

That's a shift in who initiates the work. It doesn't mean the developer is gone. It means the developer's role just changed from "the person who builds from scratch" to "the person who reviews, polishes, owns, corrects, and makes sure it actually works with real users."

And here's the thing nobody talks about: that second role? It's harder.

It requires more judgment, deeper expertise, and more ownership. Being "average" is no longer an option.

Powering that app is Codex 5.3, OpenAI's most advanced coding model yet. 25% faster than its predecessor. Multi-skilled. It doesn't just write code anymore. It does image generation. Research. Writing. It handles the full range of responsibilities that used to be spread across an entire product team.

One model. Doing the work of the designer, the researcher, the copywriter, and the developer.

Impressive? Yes. Ready to run unsupervised? Not even close.

I keep reading the phrase "AI won't replace you, someone using AI will replace you." I understand the sentiment. But I think the real shift is subtler: AI changes what your job IS, not whether you have one. The question isn't "will I be replaced?" The question is "am I ready for what my role is becoming?"

Meanwhile, Anthropic released Claude Opus 4.6 — and they're no longer just playing the coding game. They're coming for legal analysis. Financial modeling. Strategic planning. The kind of work that used to require a consultant with a fancy MBA and a $400/hour rate.

(I say this as someone who has been that consultant. I know what I'm watching shift.)

(I now do all my AI work with Opus. Not just code. EVERYTHING.)

When a single model can analyze contracts, build financial projections, and write the code to automate both — the question for the enterprise SaaS vendor becomes: what are you charging for, exactly?

But here's what the hype merchants leave out: a model that can analyze contracts can also hallucinate clauses that don't exist. A model that builds financial projections can also produce numbers that look perfectly reasonable and are completely wrong. And when that output reaches a client, a regulator, or a board — someone is liable.

That someone is not the model. It's the HUMAN who signed off on it.

Which is exactly why humans aren't going anywhere. More on this in a minute.

Now here's where it gets interesting. Because so far, everything I've described comes from big Silicon Valley companies with closed models and expensive subscriptions. Easy to dismiss as a rich company's game.

But the open-source world just showed up to the funeral with flowers and a shovel.

Alibaba released Qwen 3 Coder Next. Open-weight. Self-hostable. A serious coding brain that you can run behind your own firewall.

Why does that matter? Because it kills vendor lock-in — the last thing SaaS companies could still count on.

Why rent five different dev tools at $49/month when you can host your own brain that rebuilds them all from scratch? In-house. Under your control. No subscription. No renewal negotiations. No "we're raising prices 40% because AI is expensive, sorry lol."

"But Charafeddine, self-hosting is complicated."

It was. Last year. It's getting less complicated every month. And when your company is burning $500K/year on SaaS seats, someone will figure out the hosting.

Then came GLM5 from ZAI — targeting complex systems engineering and long-horizon agentic work. Its performance matches and sometimes beats the best closed models.

And MiniMax M2.5 went viral because it delivers frontier-level intelligence at a fraction of the cost.

Read that again: frontier-level intelligence at a fraction of the cost.

We're approaching the point where those $200/month AI subscriptions start looking like the very SaaS model they're supposed to disrupt. Because if an open model running on a $3,000 GPU gives you 90% of the leading model's performance — who exactly is paying $200/month? And for what?
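To make the arithmetic behind that question concrete, here is a minimal break-even sketch in Python. It assumes, hypothetically, that one $3,000 GPU replaces exactly one $200/month subscription and that the only other cost is a flat monthly running cost (power, upkeep) you estimate yourself. It ignores depreciation, setup time, and the remaining 10% performance gap, so treat it as a back-of-the-envelope check, not a total-cost-of-ownership model.

```python
import math


def break_even_months(hardware_cost: float,
                      subscription_per_month: float,
                      running_cost_per_month: float = 0.0) -> float:
    """Months until buying hardware costs less than paying a subscription.

    Simplified model (an assumption, not a full TCO analysis): one machine
    replaces one subscription, and running costs are a flat monthly figure.
    """
    monthly_savings = subscription_per_month - running_cost_per_month
    if monthly_savings <= 0:
        # If running the hardware costs as much as renting, it never pays for itself.
        return math.inf
    return hardware_cost / monthly_savings


if __name__ == "__main__":
    print(break_even_months(3000, 200))      # no running costs
    print(break_even_months(3000, 200, 50))  # hypothetical $50/month for power and upkeep
```

With no running costs the GPU pays for itself in 15 months; with a hypothetical $50/month overhead, in 20. If one machine can serve several people, the break-even shortens further, and that capacity assumption is the one worth testing before cancelling anything.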

The moat isn't just shrinking. It's flooding.

And then Microsoft turned GitHub into something nobody expected.

GitHub Agent HQ. What used to be a code hosting platform is now a full AI agent orchestration system. Agents can open issues, generate branches, write code, run tests, and merge when everything passes. Autonomously.

Project management. QA. Code review. DevOps. One platform.

If you're a SaaS company selling project management, testing, or deployment tools — this isn't competition. This is absorption. Your product just became a feature in someone else's platform, and they're not going to charge per seat for it.

One more thing. The quiet one. The one that connects everything.

Waymo released their world model. Yes — Google's self-driving car company.

"Charafeddine, what does a car company have to do with SaaS?"

Patience.

Waymo's world model is about simulation and prediction at scale. It's how AI systems learn to model complex environments — make decisions, predict consequences, and act. Autonomously.

Now translate that into business.

Forecasting. Logistics. Supply chain. Risk modeling. Operations planning.

Every one of those things currently lives inside a SaaS dashboard that shows you data and lets you decide. The world model approach doesn't show you anything. It understands the data and acts.

That's not an upgrade to your dashboard. That's a replacement for the concept of a dashboard.

The Pattern

Seven developments. Two weeks. And they all say the same thing:

Intelligence is getting better (Codex 5.3, Opus 4.6).
Intelligence is getting cheaper (MiniMax M2.5, GLM5).
Intelligence is getting open (Qwen 3, GLM5).
Intelligence is getting orchestrated (GitHub Agent HQ, Codex App).
Intelligence is getting autonomous (Waymo World Model).

Better, cheaper, open, orchestrated, autonomous.

Five arrows. All pointing in the same direction. All arriving at the same time.

And at the end of those five arrows is one conclusion:

When intelligence becomes abundant, software stops charging per human.

But Here's What the Panic Merchants Miss

OK. This is where most newsletters, YouTube videos, and Twitter threads stop. "SaaS is dead! AI is replacing everyone! We're all gonna die! Subscribe for more doom!"

I'm not going to do that to you. Because the doom version isn't just lazy — it's wrong.

Let me explain why with a story I keep telling my students.

Last semester, I gave my class an assignment: use an AI agent to build a small internal tool. Any tool. Full autonomy. The only rule: it has to work correctly in a production-like environment.

Every single student delivered something that looked great.

Not a single one delivered something I'd trust with real data.

The interfaces were polished. The code compiled. The demos were impressive. But when I started poking at edge cases — malformed inputs, conflicting business rules, ambiguous requirements — every single tool fell apart. Quietly. Confidently. The AI had produced output that looked correct but wasn't. And none of the students had caught it, because they didn't know what "wrong" looked like in that context.

That's the trust problem.

And it's not a bug that's getting fixed in the next model release. It's structural. AI systems generate statistically plausible output. Plausible and correct are not the same thing. Plausible and trustworthy are definitely not the same thing.
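Here is a minimal Python sketch of the kind of edge-case poking that exposes the gap. The `parse_amount` helper is a hypothetical stand-in for plausible-looking agent output (it is not taken from any student's project), and the test cases, including a client whose exports use European number formatting, are assumptions for illustration. The point is the shape of the check: you can only write the "expected" column if you know what "wrong" looks like in that context.

```python
REJECT = object()  # sentinel: this input should be refused, not silently converted


def parse_amount(text: str) -> float:
    """Hypothetical agent-written helper: strips thousands separators and converts.

    Passes the happy path, but silently assumes US-style number formatting.
    """
    return float(text.replace(",", ""))


# (input, expected) pairs written by someone who knows the business context.
# Assumption for illustration: the client's exports may use "1.234,50" style amounts.
CASES = [
    ("1,234.50", 1234.5),  # happy path
    ("1.234,50", 1234.5),  # European formatting
    ("", REJECT),          # empty field
    ("1,2,3", REJECT),     # malformed separators
    ("nan", REJECT),       # not a real amount
]


def probe(fn, cases):
    """Run fn on each case and return (input, expected, got) for every mismatch."""
    failures = []
    for raw, expected in cases:
        try:
            got = fn(raw)
        except ValueError:
            got = REJECT  # the function refused the input
        if got is not expected and got != expected:
            failures.append((raw, expected, got))
    return failures


if __name__ == "__main__":
    for raw, expected, got in probe(parse_amount, CASES):
        want = "rejection" if expected is REJECT else expected
        print(f"{raw!r}: expected {want}, got {got}")
```

The probe flags three of the five cases: the European amount, which the helper quietly reads as 1.2345, and two malformed inputs it accepts instead of refusing. Nothing crashes, which is exactly why nobody noticed.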

When the output is a social media post, plausible is fine. When the output is a financial report going to regulators, a legal brief going to court, or a medical recommendation going to a patient — plausible is a liability.

Someone has to own the output. Someone has to be accountable. Someone has to look at it and say: "This is right" or "This is wrong" — and stake their reputation on it.

That someone is not an AI agent. That someone is a human.

And that is why the "AI replaces everyone" narrative is both lazy and dangerous. It misunderstands what AI actually does. AI doesn't replace responsibility. AI generates output. Responsibility stays with the human who approves it.

The seat is dying. The human isn't. Because trust doesn't scale without humans in the loop.

Now Let's Go Back to Thomas

When Karim restructured his team around agents, the first thing his CFO asked was: "So we're saving all that software money and we need fewer people?"

Karim's answer: "No. We need different people. And we need Thomas more than we've ever needed anyone."

Here's what Karim understood that most companies don't:

Cancelling 340 licenses didn't eliminate 340 jobs. It eliminated 340 instances of a human doing work that a machine can now do faster. The humans are still there. But their work changed overnight.

Some of them are adapting. Learning to direct agents. Learning to review outputs. Learning to ask "is this actually right?" instead of "is this formatted correctly?"

Others are struggling. Not because they're not smart — because nobody ever taught them how to think about the system. They spent years mastering the interface. They never learned the logic underneath.

And Thomas? Thomas is the one who always asked why.

Not how to click buttons. Not which menu hides the export function. Not the keyboard shortcuts.

Thomas understood why the CRM existed. What data flows where and what it means. Which edge cases matter and which ones don't. When a number looks wrong before he can even explain why. What the business needs tomorrow, not just what it needed yesterday.

Thomas is the person who can look at an AI agent's output and say: "This is wrong. Row 47 doesn't make sense. The model is using last quarter's pricing logic. Fix it."

The agent can process the work of 340 people. But it can't do that. It doesn't know what "wrong" looks like. It doesn't have the context. It doesn't understand the business in its bones the way Thomas does.

And crucially — when that agent's output goes to a client and it's wrong, it's Thomas's name on the line. Not the agent's.

That's trust. That's accountability. That's the part that doesn't get automated.

"But Charafeddine, I'm Not Thomas."

I know. Most people aren't. Yet.

But "being Thomas" isn't a talent you're born with. It's a decision you make every day. It's the decision to ask why instead of how.

Why does this workflow exist?
Why do we measure this metric?
Why does this data flow from here to there?
Why did we build it this way and not that way?

Most people never ask. They learn the interface. They follow the process. They get very, very good at operating a machine they don't understand.

And that was fine — when the machine was stable.

When the machine changes every two weeks (see: the seven developments above), the only people who thrive are the ones who understood what the machine was for.

This isn't about "surviving AI." It's about evolving from a software operator to an intelligence operator. The tools change. The thinking doesn't.

What I'd Do If I Were You

I'll keep this practical.

1. Understand the logic, not just the tool.

This week, pick one tool you use every day. Not the AI — the boring SaaS tool. Your CRM. Your project manager. Your analytics platform.

Now ask yourself: if this tool disappeared tomorrow, could I explain to an AI agent exactly what it was doing and why? Could I describe the data model? The business rules? The edge cases?

If the answer is no, that's your homework. Because the tool will change. Maybe not tomorrow. But sooner than you think.

2. Build the skill that can't be automated: judgment.

Every SaaS license that gets cancelled is a workflow that's being automated. Every Thomas who becomes more valuable is a person who can tell you whether the automated output is trustworthy.

That's the skill. Domain knowledge. Business judgment. The ability to look at an AI output and know — in your gut, before you can even articulate why — that something is off.

That gut feeling is not magic. It's the residue of years of paying attention to the logic, not just the interface.

AI can generate every report. AI cannot tell you whether the report matters. AI cannot tell you whether the numbers are right in this specific context. AI cannot pick up the phone when something breaks and explain to a client why it happened and what you're doing about it.

That's you. That's the job. And it's more important now, not less.

3. Audit your tool stack — but don't panic-cancel.

I did this exercise with a client last month. 23 SaaS tools. $180K/year. We replaced 9 of them with three agent workflows in under two weeks. But — and this is the part people skip — we spent more time designing the human oversight layer than we did building the agents.

Because agents without oversight aren't a solution. They're a liability with a nice interface.

The point isn't to cancel everything tomorrow. The point is to understand what each tool actually does and who's responsible for verifying the output when you change how it gets done.
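One way to picture a "human oversight layer" is a release gate: agents can draft freely, but nothing ships until a named person has signed off, and every review note is kept. The Python below is a hypothetical illustration of that rule, not the setup used with the client above; `AgentOutput`, `review`, `is_releasable`, and `release` are names invented for this sketch.

```python
from dataclasses import dataclass, field
from typing import List, Optional


class UnapprovedOutputError(Exception):
    """Raised when something tries to ship agent output nobody has signed off on."""


@dataclass
class AgentOutput:
    task: str
    content: str
    approved_by: Optional[str] = None  # the accountable human, once they sign off
    review_notes: List[str] = field(default_factory=list)


def review(output: AgentOutput, reviewer: str, approve: bool, note: str = "") -> AgentOutput:
    """Record a human decision. Only an explicit approval attaches a name."""
    if note:
        output.review_notes.append(f"{reviewer}: {note}")
    output.approved_by = reviewer if approve else None
    return output


def is_releasable(output: AgentOutput) -> bool:
    return output.approved_by is not None


def release(output: AgentOutput) -> str:
    """Ship the output only if a named human has put their name on it."""
    if not is_releasable(output):
        raise UnapprovedOutputError(f"{output.task!r} has no accountable approver")
    return f"{output.task} released, signed off by {output.approved_by}"


if __name__ == "__main__":
    report = AgentOutput("Q3 pricing report", "draft generated by an agent")
    review(report, "Thomas", approve=False, note="Row 47 uses last quarter's pricing logic")
    print(is_releasable(report))  # False: rejected drafts stay blocked
    review(report, "Thomas", approve=True, note="Pricing logic fixed and re-checked")
    print(release(report))
```

Keeping the rejection notes next to the final approval is the design choice that matters: when an output reaches a client, you can see who signed it and what they checked first.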

The Number That Keeps Me Up

$300 billion.

That's roughly how much the SaaS industry generates annually from per-seat pricing.

AI agents don't need seats.

That $300 billion isn't going to disappear. It's going to move. From software licenses to AI infrastructure. From seat counts to orchestration platforms. From clicking buttons to building systems.

But here's the nuance everyone misses: a significant chunk of that money will move toward the humans who make the new systems trustworthy. The Thomas budget. The "who's accountable when the agent gets it wrong?" budget. The training, oversight, and quality control budget.

The money moves. The question isn't whether humans are part of the equation. They are. The question is whether you are the human the money moves toward — or the license that gets cancelled.

The End of the Seat

I'll close with this.

Karim called me again yesterday. At a more reasonable hour this time.

"Thomas got promoted."

"To what?"

"We made up a title. 'Head of AI Operations.' He's the person who makes sure the agents do what the business actually needs — and catches them when they don't."

"How's he handling it?"

"He's terrified. He told me he doesn't feel qualified."

I laughed. "That's exactly why he's qualified. The people who feel qualified are the ones who think they already know how AI works. Thomas knows he doesn't — which means he's actually paying attention."

Another pause.

"You know what he told me last week? He said his job used to be working in the system. Now his job is making sure the system works. Same company. Same data. Completely different responsibility."

Same human. Different role. Infinitely more valuable.

The seat is dead.

Not because humans are useless — but because the seat was never a good measure of human value in the first place. It measured presence, not judgment. Clicks, not thinking. License count, not trust.

AI agents don't need seats. They need direction. They need oversight. They need someone who understands the difference between a plausible output and a trustworthy one.

Seven things happened in two weeks. A trillion dollars moved. And every single one of those developments points at the same truth:

When intelligence becomes abundant, the human who decides what should be done and makes the result trustworthy becomes the most valuable person in the room.

Not the person with the most tools. Not the person with the most seats. Not even the person with the best AI subscription.

The person who thinks. The person who checks. The person who puts their name on it.

That's always been the answer. Before AI. During AI. After whatever comes next.

AI is only as good as the human operating it.

Be Thomas.

Have a great weekend.

— Charafeddine (CM)