
Deep Agents Explained: Long-Running AI Agents Beyond Tool Calling

We have all had this moment.

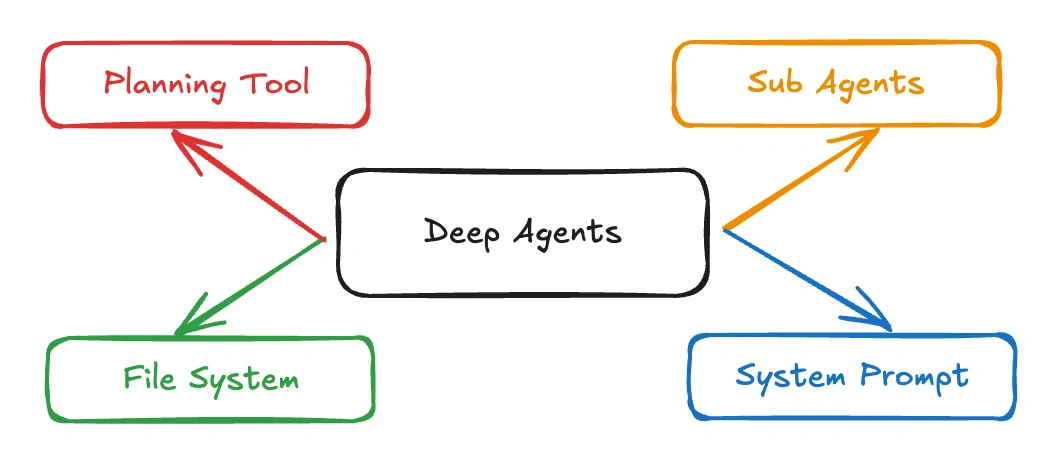

An AI agent looks brilliant for five minutes. It answers a question, calls a tool, maybe even writes a neat little script. Then we hand it a task that looks more like real work than a benchmark: research a market, organize the findings, keep track of what matters, split the task into branches, come back with a coherent answer, and do not get lost halfway through. That is usually when the magic trick starts to creak. Deep Agents exist for exactly that moment. LangChain describes Deep Agents as an open-source “agent harness” for complex, multi-step work, with built-in planning, file systems for context management, subagent spawning, and long-term memory.

A lot of agent systems do not fail because the model is weak. They fail because the task is bigger than one clean loop of “think, call tool, answer.” Deep Agents are built on LangChain and use the LangGraph runtime for durable execution, streaming, persistence, and human-in-the-loop support, which is LangChain’s way of saying: this is for work that keeps going after the first clever response.

The shift from agent demos to agent work

The easiest way to understand Deep Agents is to compare them to the kind of agent most teams build first. A simple agent is fine when the job is short, linear, and easy to keep inside one context window. Deep Agents are aimed at the opposite category: long-running, non-deterministic, multi-step tasks where the system has to plan, manage large amounts of context, delegate, and remember what it learned. LangChain’s docs say that directly: use Deep Agents when you need planning and decomposition, filesystem tools for large context, subagents for context isolation, and memory across threads or conversations.

That matters because most useful work is not one-shot work. Research is not one-shot. Coding is not one-shot. Technical analysis is definitely not one-shot. The moment a task branches, evolves, or runs long enough to drown in its own transcript, you need more than tool calling. You need structure. Deep Agents are interesting because they package that structure into the harness itself instead of asking every team to reinvent it badly on a Friday afternoon.

What Deep Agents actually are

LangChain’s own wording is useful here: Deep Agents are an “agent harness,” not just a single prompt pattern. The harness includes built-in planning, a virtual filesystem, subagent delegation, smart defaults for tool use, and context management such as auto-summarization and saving large outputs to files. The Python package is deepagents, and the simplest quickstart is three steps: import create_deep_agent from deepagents, build an agent with it, and call agent.invoke(...).

That “batteries-included” angle is not marketing fluff. LangChain’s own overview page now recommends starting with Deep Agents if you want to build an agent and want modern features like automatic compression of long conversations, a virtual filesystem, and subagent spawning. If you do not need those capabilities, or want something more custom, they point you back toward plain LangChain or lower-level LangGraph workflows.

In other words, Deep Agents sit in a very practical middle ground. They are more opinionated than building from scratch, but less rigid than a narrow productized agent. That is usually where useful engineering lives.

Why ordinary agents struggle with deep work

They lose the plot

Long tasks tend to become context junkyards. A useful thought from forty turns ago gets buried under tool logs, retries, partial summaries, and accidental repetition. Deep Agents address this with filesystem tools like ls, read_file, write_file, and edit_file, plus context management that can summarize long conversations and offload large outputs to files. LangChain highlights this explicitly in both the docs and the repo README.
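To make the offloading idea concrete, here is a minimal sketch in plain Python. It is not the deepagents implementation: the VirtualFS class, the record_tool_output helper, the /outputs/ path layout, and the 200-character threshold are all assumptions made for illustration. Only the tool names ls, read_file, and write_file are borrowed from the text above. The point is the shape of the trick: short results stay in the transcript, long ones go to a file and leave a small pointer behind.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualFS:
    """Hypothetical in-memory file store; a stand-in, not the deepagents backend."""
    files: dict[str, str] = field(default_factory=dict)

    def write_file(self, path: str, content: str) -> None:
        self.files[path] = content

    def read_file(self, path: str, offset: int = 0, limit: int = 2000) -> str:
        # Return only a window of the file so each read stays small.
        return self.files[path][offset:offset + limit]

    def ls(self) -> list[str]:
        return sorted(self.files)


def record_tool_output(fs: VirtualFS, messages: list[dict], name: str,
                       output: str, max_chars: int = 200) -> None:
    """Keep short outputs inline; park long ones in a file and leave a pointer."""
    if len(output) <= max_chars:
        messages.append({"role": "tool", "content": output})
        return
    path = f"/outputs/{name}_{len(fs.files)}.txt"
    fs.write_file(path, output)
    preview = output[:80]
    messages.append({
        "role": "tool",
        "content": f"[{len(output)} chars saved to {path}] preview: {preview}",
    })


fs = VirtualFS()
messages: list[dict] = []
record_tool_output(fs, messages, "search", "short result")
record_tool_output(fs, messages, "crawl", "x" * 5000)
```

After these two calls the transcript holds one short result and one pointer, while the 5,000-character payload sits in the file store, where it can be read back in windows when it is actually needed.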

This is one of the deepest truths in agent design: many “reasoning failures” are really context failures. The model is not always confused. Sometimes it is just trapped in a room full of its own notes.

They do not plan enough

Deep Agents include a built-in write_todos tool for task breakdown and progress tracking. That sounds simple, but it is exactly the kind of scaffolding complex work needs: split the task, keep state, update the plan when new information arrives. LangChain positions planning and task decomposition as one of the core capabilities of the framework.
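A rough picture of what "split the task, keep state, update the plan" means in code. This is a sketch under assumptions, not the real write_todos schema: the Todo and TodoList classes, the three status values, and the progress format are hypothetical. The one design choice worth noticing is that writing todos replaces the whole plan, which is what lets an agent revise it when new information arrives.

```python
from dataclasses import dataclass
from typing import Literal, Optional

Status = Literal["pending", "in_progress", "completed"]


@dataclass
class Todo:
    content: str
    status: Status = "pending"


class TodoList:
    """Hypothetical planning state; not the real write_todos schema."""

    def __init__(self) -> None:
        self.items: list[Todo] = []

    def write_todos(self, todos: list[Todo]) -> None:
        # Replace the whole plan so it can be revised when facts change.
        self.items = list(todos)

    def next_pending(self) -> Optional[Todo]:
        return next((t for t in self.items if t.status == "pending"), None)

    def progress(self) -> str:
        done = sum(t.status == "completed" for t in self.items)
        return f"{done}/{len(self.items)} completed"


plan = TodoList()
plan.write_todos([
    Todo("List the main competitors"),
    Todo("Collect pricing for each"),
    Todo("Write the summary"),
])
plan.items[0].status = "completed"
```

With the first item marked done, plan.progress() reports one of three completed and plan.next_pending() points at the pricing step.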

That changes the feel of the system. Instead of reacting one step at a time, the agent can turn a vague goal into a working agenda. “Research this market” becomes a plan. “Refactor this codebase” becomes a queue of deliberate moves. We know that does not sound glamorous. It sounds better: it sounds useful.

They try to do everything in one brain

Deep Agents include a built-in task tool that lets the main agent spawn subagents for delegated work with isolated context. The docs are very clear that this is about keeping the main context clean while still allowing the system to go deep on specific subtasks.
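Context isolation is easier to see than to describe. The sketch below is plain Python and does not call the real task tool: run_subagent and noisy_worker are hypothetical names, and the worker is a stand-in for a model loop. What it shows is the contract: the subagent gets a fresh message list, can fill it with as much noise as it likes, and hands back only its final answer.

```python
from typing import Callable

Message = dict[str, str]


def run_subagent(task: str, worker: Callable[[list[Message]], str]) -> str:
    """Run a delegated task in a fresh message list and return only the result."""
    scratch: list[Message] = [{"role": "user", "content": task}]
    result = worker(scratch)  # the worker may append as much as it likes
    return result  # scratch is dropped here; the parent never sees it


def noisy_worker(messages: list[Message]) -> str:
    # Stand-in for a model loop: many intermediate steps, one short answer.
    for i in range(50):
        messages.append({"role": "tool", "content": f"log line {i}"})
    return "Competitor A is cheapest at $10/month."


parent: list[Message] = [{"role": "user", "content": "Research the market"}]
summary = run_subagent("Compare competitor pricing", noisy_worker)
parent.append({"role": "tool", "content": summary})
```

The worker produced fifty log lines, but the parent transcript grew by exactly one message.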

That isolation is a bigger deal than the phrase “multi-agent” sometimes suggests. The value is not just having more little AIs running around like caffeinated interns. The value is being able to isolate branches of work so one line of reasoning does not pollute the whole session. That is not trend-chasing. That is context hygiene.

They forget what was already learned

Deep Agents can be extended with persistent memory across threads using LangGraph’s memory store, and the CLI version keeps memory and context across sessions while learning project conventions and reusable skills. That means the agent is not forced to relearn your preferences or your codebase every time it wakes up.
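The difference between a thread's transcript and memory that outlives it can be sketched in a few lines. Everything here is an assumption for illustration: MemoryStore and Thread are hypothetical classes, the namespace tuple is an invented convention, and none of it is the LangGraph store API. The idea is only that each conversation keeps private messages while sharing one store.

```python
class MemoryStore:
    """Hypothetical namespaced key-value store; not the LangGraph store API."""

    def __init__(self) -> None:
        self._data: dict[tuple[str, ...], dict[str, str]] = {}

    def put(self, namespace: tuple[str, ...], key: str, value: str) -> None:
        self._data.setdefault(namespace, {})[key] = value

    def get(self, namespace: tuple[str, ...], key: str, default: str = "") -> str:
        return self._data.get(namespace, {}).get(key, default)


class Thread:
    """One conversation: private messages, shared store."""

    def __init__(self, thread_id: str, store: MemoryStore) -> None:
        self.thread_id = thread_id
        self.store = store
        self.messages: list[str] = []


store = MemoryStore()
ns = ("user-42", "preferences")

monday = Thread("t1", store)
monday.messages.append("Please use pytest, not unittest.")
monday.store.put(ns, "test_framework", "pytest")

friday = Thread("t2", store)  # new thread, empty transcript
remembered = friday.store.get(ns, "test_framework")
```

The Friday thread starts with no messages at all, yet it can still look up the preference recorded on Monday.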

This is where long-running systems start to feel less like “smart autocomplete with ambitions” and more like something that can accumulate working knowledge. Not perfect memory. Useful memory. Those are very different things.

The four core capabilities that make the framework matter

1) Planning and task decomposition

LangChain ships Deep Agents with write_todos, a built-in planning tool that helps break complex tasks into steps and track progress over time. That is not a side feature. It is one of the central reasons the framework exists.

A good way to think about it is this: short tasks benefit from reasoning; long tasks benefit from reasoning plus bookkeeping. Deep Agents acknowledge that plainly.

2) Filesystems for context management

The virtual filesystem is the quiet hero of the whole design. Deep Agents can use in-memory storage, local disk, LangGraph store for persistence across threads, or isolated sandbox backends such as Modal, Daytona, and Deno. The point is not just file I/O. The point is having a place to put context so the conversation itself does not have to carry every intermediate artifact forever.
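One way to picture swappable storage is a small interface with more than one implementation behind it. This is a sketch, not the deepagents backend API: the Backend protocol, InMemoryBackend, DiskBackend, and save_notes are hypothetical names, and only two of the storage options mentioned above are modeled.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class Backend(Protocol):
    def write(self, path: str, content: str) -> None: ...
    def read(self, path: str) -> str: ...


class InMemoryBackend:
    def __init__(self) -> None:
        self._files: dict[str, str] = {}

    def write(self, path: str, content: str) -> None:
        self._files[path] = content

    def read(self, path: str) -> str:
        return self._files[path]


class DiskBackend:
    def __init__(self, root: Path) -> None:
        self._root = root

    def write(self, path: str, content: str) -> None:
        target = self._root / path.lstrip("/")
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content, encoding="utf-8")

    def read(self, path: str) -> str:
        return (self._root / path.lstrip("/")).read_text(encoding="utf-8")


def save_notes(backend: Backend, notes: str) -> str:
    # Agent-side code depends only on the interface, not on where bytes live.
    backend.write("/notes/findings.md", notes)
    return backend.read("/notes/findings.md")


in_memory_result = save_notes(InMemoryBackend(), "- finding one")
with tempfile.TemporaryDirectory() as tmp:
    disk_result = save_notes(DiskBackend(Path(tmp)), "- finding one")
```

The calling code is identical in both cases, which is the practical benefit: where the notes live becomes a deployment decision rather than something baked into the agent logic.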

3) Subagents for isolation and delegation

The task tool lets the agent delegate to subagents with isolated context windows. This is ideal for independent research branches, code investigations, comparison tasks, and any work that should not blow up the parent context.

This is where the framework starts feeling like an actual work system. One agent can keep steering, while others go off and do bounded pieces of work. That is not only faster. It is cleaner.

4) Long-term memory and skills

Deep Agents can be extended with long-term memory, and LangChain also supports reusable “skills” that package specialized workflows and domain knowledge. The CLI docs describe these skills as customizable expertise the agent can use over time.

That combination is powerful because it turns repeated effort into compound effort. The first time the agent learns your conventions, it is overhead. The fifth time, it is leverage.

Where Deep Agents shine in the real world

Research

Deep research almost never fits in one response. It involves gathering sources, extracting findings, identifying gaps, revising the plan, storing notes, and eventually synthesizing a result. LangChain explicitly positions Deep Agents for long-running work like research.

This is the kind of task where a basic agent often looks good in round one and drifts by round five. Deep Agents are built for the whole loop.

Coding

LangChain ships a Deep Agents CLI specifically as a terminal coding agent. It supports file operations, shell execution, web search, HTTP requests, planning, memory, skills, MCP tools, and approval controls for sensitive operations.

That is a serious list. It means the framework is not just saying, “sure, you could probably use this for coding.” It is saying, “we built a coding interface on top of it because this is one of the obvious use cases.” And that makes sense, because coding is exactly the kind of work that benefits from planning, persistence, and delegated investigation.

Multi-step analysis

Due diligence, debugging, literature review, architecture audits, product tear-downs, competitive analysis, migration planning. These are not merely “answer generation” tasks. They are work-management tasks with a reasoning component. Deep Agents are compelling because they treat them that way.

A tiny official example, and what it teaches us

The official overview shows a minimal Deep Agent example:

    from deepagents import create_deep_agent

    def get_weather(city: str) -> str:
        """Get weather for a given city."""
        return f"It's always sunny in {city}!"

    agent = create_deep_agent(
        tools=[get_weather],
        system_prompt="You are a helpful assistant",
    )

    agent.invoke(
        {"messages": [{"role": "user", "content": "what is the weather in sf"}]}
    )

That snippet is intentionally simple, but it reveals the philosophy: the interface is easy, the harness is opinionated, and the extra capabilities are already there when the task gets deeper. LangChain’s customization docs show that create_deep_agent can be configured with model, tools, system prompt, middleware, subagents, backends, human-in-the-loop, skills, and memory.

This is exactly what many teams want: start with a working agent, then add complexity where it matters, instead of starting from zero and building your own fragile tower of tools, retries, and context hacks.

Deep Agents vs simpler agent frameworks

The cleanest comparison is not “better or worse.” It is “for what kind of work?”

If the task is short, predictable, and easy to keep in one thread, simpler agents are often enough. LangChain says as much: for simpler agents, consider create_agent, or build a custom LangGraph workflow when you want heavier customization and a mix of deterministic and agentic behavior.

Deep Agents are the better fit when the task is long, open-ended, stateful, and likely to blow up a normal context window. LangChain’s own recommendation is telling: if you want a batteries-included agent with long-conversation compression, a virtual filesystem, and subagent spawning, start with Deep Agents.

That is a useful boundary. Not every problem needs the overhead of a deeper harness. But the problems that do, really do.

The engineering lesson hiding underneath

The strongest lesson in Deep Agents is not “agents should be more autonomous.”

It is this:

Autonomy without structure gets messy fast.

Deep Agents work because they force structure into the problem:

- plan the work

- isolate the work

- store the work

- compress the work

- remember the work

That is why the framework feels more mature than a lot of agent demos. It treats long-running agency like a systems problem, not just a prompt problem.
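Of the five items in that list, "compress the work" is the least obvious, so here is a small sketch of one way it could look. It is an illustration under assumptions, not how Deep Agents summarize: the compact function, its character budget, and the naive_summary stand-in (which a real system would replace with a model call) are all hypothetical.

```python
from typing import Callable


def compact(messages: list[str], max_chars: int, keep_recent: int,
            summarize: Callable[[list[str]], str]) -> list[str]:
    """If the transcript exceeds max_chars, fold older messages into one summary."""
    total = sum(len(m) for m in messages)
    if total <= max_chars or len(messages) <= keep_recent:
        return messages
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    return [summarize(older)] + recent


def naive_summary(older: list[str]) -> str:
    # Stand-in for a model call; real summarization would preserve key facts.
    return f"[summary of {len(older)} earlier messages]"


history = [f"message {i}: " + "detail " * 20 for i in range(30)]
compacted = compact(history, max_chars=1000, keep_recent=3, summarize=naive_summary)
```

Thirty verbose messages become one summary line plus the three most recent messages, left untouched. The recent turns are kept verbatim because they are the ones the next step most likely depends on.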

And honestly, that is where the whole field is heading. The next generation of useful AI systems will not just be the ones that can call a lot of tools. They will be the ones that can survive complexity without collapsing into transcript soup.

The caveat nobody should skip

The more capable an agent becomes, the more important boundaries become.

The Deep Agents GitHub repo is blunt about this: enforce boundaries at the tool and sandbox level, not by expecting the model to self-police. That is one of the most important security lines in the entire ecosystem, and it deserves to be said louder.

So yes, Deep Agents are powerful. But if you give them weak tools, sloppy access control, vague boundaries, or unsafe execution surfaces, you are not building sophistication. You are building a prettier accident.

That is especially relevant because the CLI includes shell execution, web access, HTTP requests, file editing, and delegated subagents. Those are exactly the kinds of capabilities that make an agent useful and exactly the kinds that need thoughtful approval and sandboxing.
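Here is what "enforce boundaries at the tool level" can look like in practice. This is a sketch, not the Deep Agents approval mechanism: make_write_tool, ToolDenied, and the approve callback are hypothetical names. The path check and the approval gate live in ordinary code, so they hold no matter what the model decides to ask for. It assumes Python 3.9 or later for Path.is_relative_to.

```python
import tempfile
from pathlib import Path
from typing import Callable


class ToolDenied(Exception):
    pass


def make_write_tool(root: Path, approve: Callable[[str], bool]):
    """Build a write tool confined to root and gated by an approval callback."""
    root = root.resolve()

    def write_file(path: str, content: str) -> str:
        target = (root / path).resolve()
        # Enforced in code: paths that escape the sandbox root are rejected.
        if not target.is_relative_to(root):
            raise ToolDenied(f"{path} is outside the sandbox")
        if not approve(f"write {target.relative_to(root)}"):
            raise ToolDenied("operation not approved")
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content, encoding="utf-8")
        return f"wrote {len(content)} chars"

    return write_file


with tempfile.TemporaryDirectory() as tmp:
    write_file = make_write_tool(Path(tmp), approve=lambda action: True)
    ok = write_file("notes/a.txt", "hello")
    try:
        write_file("../escape.txt", "nope")
        escaped = True
    except ToolDenied:
        escaped = False
```

The write inside the sandbox succeeds, and the attempt to climb out with ../ is refused before any file is touched. Swapping the approve callback for a real human prompt would turn the same wrapper into an approval control.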

Key takeaways

Deep Agents are LangChain’s open-source “agent harness” for complex, multi-step, long-running work, built on LangChain and the LangGraph runtime. They are designed around planning, filesystems for context management, subagents for delegation, and long-term memory.

Their biggest contribution is architectural, not cosmetic. They address the real failure modes of advanced agents: poor planning, context overload, weak persistence, and trying to do everything in one thread.

They shine in research, coding, and other deep knowledge work where the task evolves over time and the system needs to keep its bearings. The Deep Agents CLI makes that especially concrete by packaging coding-friendly tools, memory, approval controls, and context compaction into a ready-to-run interface.

They also demand discipline. Tool boundaries, sandboxes, human approval, and memory hygiene matter more as capability grows. The framework is honest about that, and that honesty is one of its strengths.

The most useful way to think about Deep Agents is this:

They are what happens when we stop asking agents to merely respond, and start asking them to actually work.

— Cohorte Intelligence

March 20, 2026.